Smartness

Published:

As I gradually came to understand the capabilities of LLMs and used them more and more, I started to think about them from the perspective of “smartness”. I consider myself enthusiastic about many things, and I saw my smartness as what lets me deal with such a wide range of topics. But given the amazing capabilities of LLMs, nobody can be as erudite as they are. So phase one of my thinking about smartness was the question: “is the smartness of most human beings useless?”

For quite some time I was in a state of “depression” about this. Not to the level of being really depressed, but an unclear self-identity is definitely something I care about. But when I started to consider how effectively I use LLMs, and how well-organised my chats are, I began to feel that my enthusiasm is actually a unique key to unlocking the potential of LLMs. That was phase two, in which I believed that “the smartness of humans coexists with the smartness of LLMs very well”.

I feel like I’m stepping into phase three after some recent reading on cognitive science. The masterpiece [Thinking, Fast and Slow] is really eye-opening. The core of the book is the well-known distinction between System 1 and System 2, which may already be familiar to the reader: System 1 is the fast, intuitive, and emotional part of our brain, while System 2 is the slow, deliberate, and logical part. One section made me rethink what kind of smartness I had been thinking about. Usually, my mistaken sense of my own smartness comes from using my System 2 to analyse other people’s System 1-driven behavior; if I were to judge my own System 1, I might rate myself as far from smart. Moreover, System 1 actually dominates human behavior because System 2 is lazy, even though System 1 is the less reliable of the two.

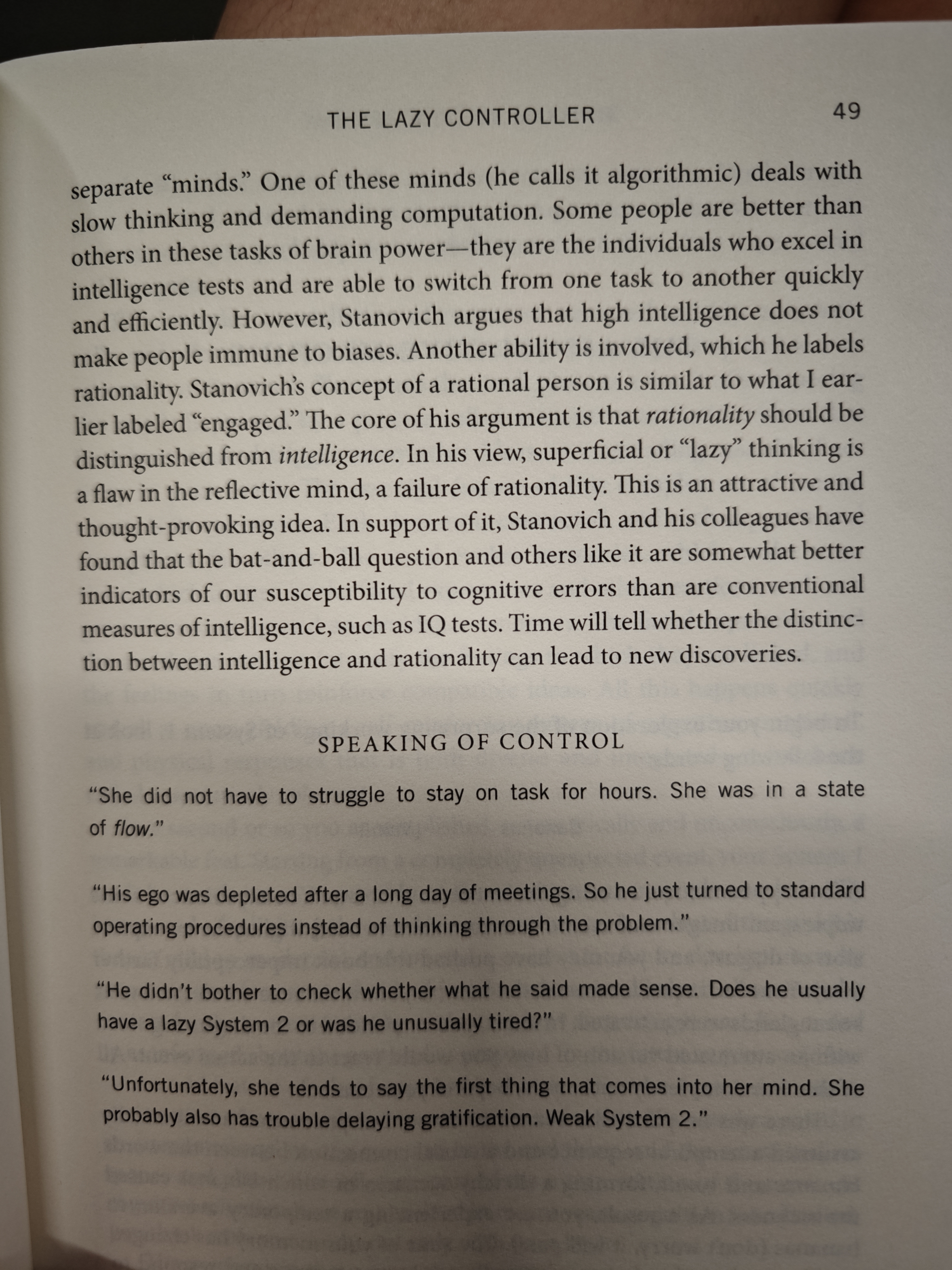

With my current understanding, I’m going to define the smartness of humans in three aspects: (a) the capability of System 1, (b) the diligence of System 2, and (c) the capability of System 2. There are more detailed distinctions across the two systems (e.g., intelligence vs. rationality, as the picture shows). And you might have noticed something: System 1 and System 2 are really analogous to the “fast” and “slow” thinking modes of LLMs. When people joke about how wrong the fast mode can be, it’s very similar to how I judged other people’s System 1-based behavior.

A more important question is: what does smartness look like once humans and LLMs are combined? Given the current state of LLMs, here is my personal understanding: an LLM is a powerful complement to the System 2 of human beings, while the System 2 of the models still benefits from the System 2 of human beings. Going through the three aspects in turn: (a) can be seen from two different views. At the personal level, a human’s System 1 is still important for daily life, but from a global view it is too weak compared to the models. (b) is actually the most important part of human smartness, because if you enjoy thinking, the models are now strong external brains for your System 2. And finally, the (c) of humans and the (c) of LLMs work together to achieve amazing things: human domain knowledge guides the LLMs’ reasoning, and the comprehensive knowledge of LLMs supports humans by covering the shortcomings of the human System 2.